Electronic Bill of Rights is Free Information for Tech Safety and Privacy Advocates

Surveillance Capitalism: The Information Trafficking Industry Explained

Google, Apple, Microsoft, Meta, TikTok, Amazon, and other tech giants, including those from China and Russia, are the largest data brokers in the world. They develop operating systems, highly addictive and dangerous apps, AI, social media, chatbots, and other platforms to monitor, track, and data mine their paying customers for profit, without compensating the customers who produce the product: their confidential personal and business information.

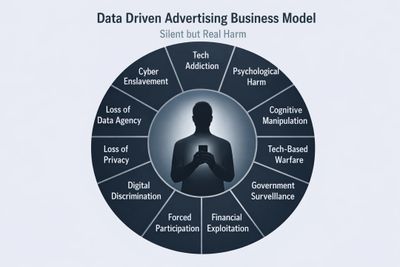

Most discussions about tech addiction, online exploitation, and the loss of privacy, civil liberties, and human rights associated with the use of highly addictive apps, AI, social media, and chatbots focus on individual symptoms rather than the system that creates them: the multi-trillion-dollar-per-year information trafficking industry known as Surveillance Capitalism.

Surveillance Capitalism is a business model centered on the control, manipulation, and exploitation of adults, teens, and children through phones, computers, connected products supported by operating systems, apps, AI, social media platforms, and AI chatbots that have become necessities of modern life, as much of today's global trade, commerce, communication, education, healthcare, banking, and entertainment is conducted through the internet.

Addictive, manipulative, and intrusive design has become essential for advertising agencies, as well as for operating system, app, AI, and platform developers.

Unbeknownst to most end users, many AI-infused apps supporting phones, computers, and connected products are designed not only to provide functionality, but also to enable developers to monitor, track, data mine, influence, and manipulate user behavior for profits generated through targeted advertising.

As a result, the owners of smartphones, computers, and connected products end up funding their own surveillance, data mining, consumer exploitation, and behavioral manipulation through the very products and services they pay for.

This extends beyond the individual user to include family members, coworkers, and children whose information may also be collected, analyzed, and monetized at the expense of their privacy, security, and safety.

This is morally wrong on many levels because the product owner not only pays for the product and service but also produces a valuable commodity—their personal and business information. That information is then collected, aggregated, shared, and monetized by manufacturers, developers, advertisers, data brokers, and third-party partners through predatory terms of service that form contracts of adhesion, forcing participation through coercion rather than through informed and meaningful consent.

While Surveillance Capitalism is primarily fueled by the targeted advertising industry, it extends far beyond advertising. It has evolved into a global system of behavioral influence, data extraction, and control delivered through everyday consumer and enterprise telecommunications and technology products. At the center of this ecosystem are phones, computers, and connected products supported by Android, iOS, and Windows—the dominant operating systems developed by Google, Apple, and Microsoft that serve as the primary gateways to the digital world.

Google, Apple, and Microsoft control access to global internet trade and commerce through their monopolization of the operating system market. They also control the global development and distribution of AI, apps, social media platforms, digital currency and financial platforms, entertainment and mass media platforms, and AI chatbots, all of which must be developed to support Android, iOS, or Windows in order to be distributed globally through preinstalled app agreements or through Google Play, the Apple App Store, or the Microsoft Store.

The Electronic Bill of Rights (EBOR) addresses the broader digital ecosystem responsible for the harms caused by Surveillance Capitalism, enabled by technology monopolies, protected through lobbying, and delivered through the phones, computers, and connected products billions of people depend upon every day.

The sections below provide a high-level overview of this system. For a detailed analysis, see the report: The Digital Control System: Understanding the Structure Behind Surveillance Capitalism.

The economic engine driving the modern internet is targeted advertising. At the center of this ecosystem are global advertising technologies, including Google's AdTech Core Platform and interconnected advertising networks operating worldwide. These systems collect, analyze, and monetize personal information to maximize engagement, behavioral influence, and advertising revenue.

Most consumers gain access to digital products and services only after accepting lengthy, non-negotiable terms of service. These contracts often create a system of forced participation where meaningful consent is replaced by dependency on essential technologies.

A small number of technology companies dominate operating systems, app distribution, AI development, digital advertising, cloud infrastructure, and online commerce. Through extensive lobbying and political influence, these companies shape legislation, regulation, and enforcement affecting the digital economy.

The growing convergence of governments, technology providers, telecommunications companies, and data-driven platforms raises concerns regarding surveillance, censorship, social control, political influence, and the erosion of civil liberties and human rights through digital systems.

Operating systems, applications, social media platforms, AI systems, and chatbots serve as the primary interfaces through which users interact with the digital world. These technologies increasingly shape behavior, perception, decision-making, and access to information.

The digital ecosystem is delivered through billions of consumer products, including smartphones, computers, wearables, vehicles, smart home devices, and other connected technologies supported by telecommunications and internet infrastructure.

Advertising revenue supports a significant portion of modern media, online content, social platforms, search engines, and digital services. This creates incentives that prioritize engagement, attention, and data collection over transparency, privacy, and user well-being.

The harms associated with tech addiction, surveillance, privacy loss, censorship, manipulation, and the erosion of civil liberties are not isolated problems. They are the predictable outcomes of a larger digital ecosystem built upon surveillance, data extraction, and behavioral influence.

The Electronic Bill of Rights addresses the system itself by restoring sovereignty over a person's Name, Image, Likeness, and Data (NILD) while extending privacy, security, safety, civil liberties, human rights, and digital rights into the AI and Quantum Digital Age.

Today, athletes, entertainers, and public figures benefit from legal protections over their Name, Image, and Likeness (NIL), recognizing that their identity has value and should not be exploited without their knowledge, consent, or compensation.

The same principle should apply to every person connected to the internet.

Every day, billions of people generate highly valuable personal and business information through the operating systems, AI-infused apps, platforms, phones, computers, wearable devices, vehicles, smart home systems, and other connected products they own and pay for.

This information should be the property of the product owner since they pay for, use, and depend on these products and services for everyday life.

This information—including personal, biometric, behavioral, location, health, communications, and usage data—is produced by the individual through the use of products and services they purchase, yet it is routinely collected, analyzed, monetized, and exploited by third parties without meaningful consent.

Collectively, information such as identification records, addresses, facial recognition data, biometric information, behavioral patterns, location history, and other sensitive personal data is used to create an identifiable user profile based on an individual's Name, Image, Likeness, and Data (NILD).

These identifiable user profiles are generated through the use of phones, computers, applications, social media platforms, AI systems, and other connected products owned and paid for by the individual who produces the data. Yet the resulting NILD profiles are routinely collected, analyzed, monetized, and exploited for profit by technology companies such as Google, Apple, Microsoft, Amazon, Meta, X, ByteDance, and countless third-party data brokers—often without the user's informed consent or compensation.

In many cases, these user profiles are made available through digital advertising ecosystems, where advertisers bid in real time for access to targeted audiences. Through data-sharing arrangements, advertising exchanges, and data brokerage networks, personal information associated with an individual's NILD may be distributed to thousands of organizations, generating billions of dollars in annual revenue.

The fundamental principle behind NILD is that the identifiable user profile—and the data used to create it—originates from the individual. Therefore, individuals should retain ownership, control, and legal rights over the collection, use, licensing, sale, and monetization of their Name, Image, Likeness, and Data.

Additionally, operating system, AI, app, and social media developers often employ highly addictive engagement mechanisms, behavioral targeting techniques, manipulative advertising practices, and AI-driven recommendation systems designed to maximize user attention and screen time. These technologies are frequently embedded within products and services to increase user engagement, data collection, and targeted advertising revenues.

Admissions by designers of AI-infused apps and social media platforms, testimony from medical experts, and recent social media trials have shown that these systems exploit psychological vulnerabilities, particularly among children and teenagers, contributing to tech addiction, anxiety, depression, social isolation, self-harm, and documented deaths.

As a result, many believe that the deliberate use of addictive and manipulative digital design practices for commercial gain should be prohibited, especially when such practices prioritize profits over the privacy, safety, and well-being of end users.

Name, Image, Likeness, and Data (NILD) extends these protections beyond celebrities and athletes to include ownership and control over the data individuals create through their daily use of technology.

Under NILD, individuals retain sovereignty over their digital identity and the information they generate. Just as a celebrity has the right to control the commercial use of their name and likeness, every internet user should have the right to control the collection, use, storage, sale, licensing, and monetization of the data they produce.

NILD is a foundational principle of the Electronic Bill of Rights (EBOR). It recognizes that personal data is not the property of technology companies, advertisers, data brokers, app developers, social media platforms, or AI providers. It is the property of the individual who creates it.

By restoring ownership and control of personal data to the individual, NILD addresses the root causes of many of today's digital harms, including tech addiction, mass surveillance, privacy loss, behavioral manipulation, and the erosion of civil liberties and human rights. It challenges business models that depend on surveillance capitalism, targeted advertising, and the exploitation of users through highly addictive AI-infused apps, social media platforms, chatbots, and other digital systems designed to maximize engagement and data extraction.

The goal of NILD is simple: restore privacy, security, safety, data sovereignty, civil liberties, and human rights to the rightful owner of the information—the individual.

Whether adult, teen, or child, every person connected to the internet deserves the same fundamental protections over their digital identity and personal information that athletes and celebrities receive for their name, image, and likeness.

In the digital age, ownership of one's identity must include ownership of one's data.

That is the promise of NILD. That is the foundation of the Electronic Bill of Rights.

Surveillance Capitalism and Targeted Advertising: The Root Cause of Tech Addiction and the Loss of Privacy, Security, Safety, Civil Liberties, and Human Rights

The root cause of tech addiction, along with the loss of privacy, security, safety, civil liberties, and human rights, is the targeted advertising industry that fuels Surveillance Capitalism, combined with the growing convergence of government, multinational telecommunications providers, technology companies, and advertising networks.

This ecosystem creates powerful incentives to collect, analyze, monetize, exploit, and influence human behavior at an unprecedented scale, transforming personal information into a commodity while eroding individual autonomy, informed consent, and digital sovereignty.

The result is a global system of consumer exploitation in which adults, teens, children, and business professionals are increasingly required to surrender control over their personal and business information simply to use the essential phones, computers, and connected products needed to participate in today's AI- and quantum-driven world.

The results have been catastrophic for public safety: tech addiction, manipulation, and political, ideological, and consumer indoctrination, all enabled by highly addictive brain-hijacking technologies, manipulative advertising, and AI-driven influence systems embedded within AI-infused apps, social media platforms, and chatbots that produce the "Eliza Effect" (AI indoctrination).

These technologies have contributed to a global epidemic of addiction and harm among adults, teens, and children who are exploited for profit at the expense of their privacy, security, and safety.

Much like a pandemic, the effects have spread across societies, impacting mental health, behavior, relationships, education, public discourse, and overall well-being on a massive scale.

In the end, how many adults, teens, and children must be addicted, harmed, or killed before the advertising and tech industries change their business model of Surveillance Capitalism fueled by targeted advertising?

The Electronic Bill of Rights (EBOR) is not merely privacy legislation or a tech safety initiative. It is a human and civil rights framework for the AI and Quantum Digital Age. EBOR seeks to restore privacy, security, safety, civil liberties, human rights, and both data and financial sovereignty by extending traditional constitutional protections into a world increasingly governed by digital systems, artificial intelligence, and connected technologies.

My name is Rex M. Lee, and I am a security advisor to governments, an OTA app and platform developer, and a former advisor to Congress on technology hearings (2017–2021) involving Meta, Google, ByteDance, and other social media platform developers. During my tenure as a government advisor on congressional technology hearings, I was asked by a sitting U.S. Senator's office to draft an Electronic Bill of Rights from the perspective of an OTA platform developer and technology practitioner rather than from the perspective of lobbyists representing major technology companies.

The objective was to provide policymakers with a real-world understanding of how modern operating systems, applications, platforms, AI technologies, and digital ecosystems function, along with the risks they pose to privacy, security, civil liberties, human rights, and consumer rights. It is important to understand that I am not a paid activist, nor am I seeking donations or financial support. As a matter of fact, I have never charged the U.S. government—or any other government—for my counsel or advisory services.

I believe that tech addiction and the loss of privacy can be significantly reduced by enforcing existing consumer and child protection laws regulated by the Federal Communications Commission (FCC), Federal Trade Commission (FTC), and State Attorneys General, while eliminating the business practices that make Surveillance Capitalism possible, including:

For more than fifteen years, policymakers, regulators, and advocacy groups have focused on the symptoms of the problem rather than the underlying economic engine driving it. Congressional hearings, lawsuits, books, documentaries, and new legislation have increased awareness, yet tech addiction, exploitation, surveillance, manipulation, AI indoctrination, and the erosion of privacy have continued to accelerate while more adults, teens, and children are harmed.

Privacy, security, and tech safety are important concerns, but they are symptoms of a much larger issue: the erosion of individual autonomy, property rights, due process, freedom of expression, and human dignity within a world increasingly governed by consumer technologies supported by telecommunications and internet infrastructure. The Electronic Bill of Rights addresses the structural framework responsible for these harms by restoring sovereignty over a person's Name, Image, Likeness, and Data (NILD) and extending fundamental human and civil rights protections into the digital age.

Contact Rex M. Lee: Free Information

If you are a privacy or technology safety advocate interested in addressing the root causes of tech addiction and the loss of privacy by eliminating Surveillance Capitalism, I encourage you to contact me.

I would be happy to share more than 12 years of research into operating systems, mobile applications, social media platforms, AI systems, chatbots, and other digital technologies.

My research also examines the growing risks associated with civil-military fusion programs, including the potential weaponization of personal information by governments and other actors for surveillance, influence, intelligence, and security-related purposes.

Read the sections below for more history and information.

Executive Summary: Understanding Harms Caused by Targeted Advertising Rooted in Surveillance Capitalism

The Electronic Bill of Rights (EBOR) is not a theoretical framework—it is grounded in existing federal law.

Before individuals can understand how they are harmed, they must first understand how the surveillance capitalism business model operates through essential telecom and technology products—such as smartphones—that are necessary for modern life and come at a cost.

It is also critical to understand which devices and platforms—including those supported by Android, iOS, and Windows—serve as the primary vectors for surveillance, as well as the scale and scope of data extraction from those devices and services.

The following section explains how this process works, including:

It is not just privacy that is at stake. It is the systematic exploitation of individuals for profit, at the expense of their privacy, security, and safety, as well as their data and financial sovereignty, biological autonomy (including biometric and genetic information), civil liberties, and fundamental human rights, carried out through products and services they pay for.

Modern connected devices—including smartphones, tablet PCs, connected home products, personal computers, servers, wearable technologies, security and environmental systems, connected vehicles, AI-enabled toys, and other digital services—form a pervasive and continuous data-collection environment.

These devices and platforms operate as always-on data collection nodes, enabled by operating systems such as Android, iOS, and Windows, as well as AI-infused applications, social media platforms, and chatbots.

Within this ecosystem:

This model is primarily supported by data-driven business practices centered on targeted advertising, often described as surveillance-based monetization.

Participation in this ecosystem is frequently governed by non-negotiable terms of service (contracts of adhesion), where access to essential products and services is conditioned on acceptance of broad data collection and use policies.

Data collected from individuals—including teens, children, and business professionals—is often facilitated through what can be described as “leaky operating systems” (Android, iOS, and Windows). These systems support a broad ecosystem of AI-infused applications, social media platforms, and AI chatbots that are designed to drive engagement and data collection at scale.

Within this framework, many of these applications and platforms function in ways that may be characterized as “legal malware”—software that operates within the bounds of the predatory contract of adhesion (terms of service) while enabling extensive data extraction, behavioral tracking, and monetization.

These operating systems and their associated ecosystems underpin essential connected telecom and technology products—including smartphones, computers, and other digital services—that are now required for participation in modern life.

The surveillance and data mining conducted by Alphabet (Google), Apple, Microsoft, Meta, TikTok USDS JV, ByteDance (China), Wildberries (Russia), and others is "indiscriminate," meaning these companies collect the following buckets of highly confidential information (over 5,000 data points) from individuals, businesses, and government agencies:

All data collected from individuals—including teens and children—is aggregated into identifiable digital profiles, often referred to as Digital DNA. These profiles represent detailed behavioral, personal, and usage patterns tied to a specific individual.

Operating system providers and developers—including those behind Android, iOS, and Windows—enable the creation of these identifiable user profiles through system-level data access, application activity, and integrated services. These profiles are then leveraged for monetization, primarily through targeted advertising, as well as other commercial uses, including data sharing and resale.

In addition to data collected directly from device owners and end users, operating system providers, app developers, and AI platforms may also acquire and combine user profile data from third-party sources. This results in a layered aggregation model, where multiple entities contribute to and exploit increasingly comprehensive user profiles.

The outcome is a data ecosystem in which:

The widespread use of highly addictive and manipulative technologies embedded in AI-infused applications, social media platforms, and AI chatbots has contributed to a growing global technology addiction crisis. This crisis affects adults, teens, and children alike and has been associated with increases in anxiety, depression, violence, online bullying, self-harm, public discord, political polarization, and suicide.

At some point, the question needs to be asked:

"If addiction, harm, mental health decline, exploitation, and the loss of life among teens and children are not the line at which government, advertisers, and Big Tech change their business models, then what is?"

Individuals who access the internet through devices supported by Android, iOS, or Windows operating systems are subject to large-scale data collection and profiling within Google's global advertising ecosystems, supported by the AdTech AI-Quantum Control Platform (Core), which distributes targeted ads to billions of people around the world 24 hours a day, 365 days a year.

Google's ecosystem, often described as the AdTech Core and its ecosystem of micro cores, aggregates user data to enable the delivery of targeted advertising across international markets. Through a network of over 35,000 data brokers, developers, advertisers, PR agencies, and platform providers, user information can be distributed and used across multiple jurisdictions worldwide, including China and Russia.

This includes regions with varying regulatory standards, raising important questions about:

These platforms operate through interconnected advertising infrastructures—sometimes referred to as “micro AdTech ecosystems”—that support real-time bidding, audience targeting, and behavioral profiling at planetary scale.

Through biometric data, individuals can potentially be identified and located, even without carrying their personal device, using technologies such as facial recognition and voice pattern analysis. These capabilities can operate through nearby connected devices, cameras, or other networked products in close proximity via Google's AdTech Core and global ecosystem. For advertisers, this is the greatest system ever created; for military use, it is the greatest targeting system on the planet.

Global advertising technology (AdTech) infrastructures—originally developed for commercial targeting—are increasingly being examined in the context of national security and information operations.

Large-scale AdTech platforms and their surrounding ecosystems enable capabilities such as:

These same capabilities may be leveraged beyond commercial use cases. Governments and state-aligned actors have been documented utilizing data-driven platforms and digital ecosystems for purposes including:

This convergence of commercial data infrastructure and government use cases is often described as civil–military fusion, where technologies developed in the private sector are adapted for national security and strategic operations.

Civil-military programs in China and Russia, as well as those in the U.S. involving Palantir Technologies' Gotham Intelligence and Military Core, pose massive threats to the privacy, security, and safety of everyone, including teens and children, who is connected to the internet through any device or service supported by Android, iOS, or Windows.

Summary of Harms Caused by Targeted Advertising Rooted in Surveillance Capitalism

Tech addiction cannot be compared to alcohol, drug, or tobacco addiction.

Addictive brain hijacking, manipulative advertising, and AI indoctrination combine into military-grade brainwashing technology that is far more dangerous than the subliminal advertising technology banned in the 20th century.

Addictive design is as harmful to adults as it is to teens and children and should be banned.

Engineered engagement loops create dependency, addiction, and loss of behavioral control.

These technologies are linked to anxiety, depression, loneliness, self-harm, suicide, online harassment, and social isolation.

AI and algorithmic systems influence perception, decision-making, behavior, and beliefs without meaningful awareness or consent.

Nation-states and adversaries exploit social media, AI systems, and consumer apps to conduct psychological and cognitive warfare at scale.

Government and platform alignment raises concerns regarding mass surveillance, civil liberties, and individual autonomy.

Phones, computers, and connected products have evolved into always-on surveillance and data-extraction systems.

Consumers must accept non-negotiable terms of service with no meaningful opt-out, negotiation, or informed consent.

Complex and fragmented terms of service obscure actual surveillance, data collection, and monetization practices.

Embedded tracking technologies enable multi-entity surveillance, hidden data sharing, and limited user visibility.

Consumers generate valuable data, pay for devices and connectivity, yet receive no compensation while platforms profit from their behavior.

Thousands of data points are used to create highly detailed profiles containing personal, business, biometric, health, behavioral, and location information.

Digital profiles can be used for pricing manipulation, opportunity restriction, bias, and unequal access to services.

Continuous monitoring across home, work, medical, legal, and social environments erodes fundamental rights at scale.

Control of digital ecosystems by a handful of technology companies concentrates economic power, market access, and control over personal data.

The current model persists because it is highly profitable.

This is not a failure of awareness—it is a failure of incentives and enforcement.

EBOR addresses the structural causes of these harms through:

Most policy proposals address only one layer of the problem.

EBOR addresses:

Because if the data is compromised, the intelligence built upon it is compromised.

The Electronic Bill of Rights calls upon lawmakers, regulators, businesses, critical infrastructure operators, tech safety advocates, privacy advocates, and consumers to recognize a simple reality:

Privacy, security, safety, civil liberties, human rights, and NILD sovereignty are not optional features—they are fundamental rights.

Together, these harms highlight the need for an Electronic Bill of Rights (EBOR) to restore privacy, security, safety, civil liberties, human rights, data ownership, and informed consent in the AI and Quantum Digital Age.

Non-Enforcement of Existing Consumer, Child Protection, Privacy, and National Security Laws Beyond the Scope of Section 230

For decades, telecommunications, consumer protection, child safety, and privacy laws have established clear legal obligations regarding the protection of user data, confidentiality, and the lawful use of communications infrastructure.

Yet today, consumers—including minors—are routinely subjected to pervasive surveillance and data mining through smartphones, applications, operating systems, and connected products, despite the existence of these established legal safeguards.

These practices are often justified through terms of service and privacy policies. However, EBOR asserts that contractual consent does not override statutory law, particularly when services are essential to modern life.

Telecommunications infrastructure—including wireless, landline, fiber, and satellite—is regulated under a public interest framework, not a purely commercial one. The use of this infrastructure for surveillance-based business models raises serious legal and constitutional questions.

47 U.S.C. § 222

Telecommunications carriers are legally required to protect the confidentiality of customer data, including:

Carriers do not own this data—they are custodians with a duty to protect it.

47 C.F.R. §§ 64.2001–64.2011

These rules implement Section 222 and require:

These are enforceable regulatory obligations, not optional guidelines.

3. Unjust and Unreasonable Practices

47 U.S.C. § 201(b)

It is unlawful for telecommunications providers to engage in practices that are unjust or unreasonable.

The misuse of customer data—especially at scale—may fall within this prohibition.

47 U.S.C. § 202(a)

This statute prohibits discriminatory or coercive practices in telecommunications services, including conditions that unfairly burden consumers.

Telecommunications carriers operate under a legal requirement to serve the:

“Public interest, convenience, and necessity.”

This establishes a public trust obligation—meaning infrastructure cannot be used solely for exploitative commercial purposes at the expense of consumers.

FTC Act – 15 U.S.C. § 45

Prohibits:

This applies directly to digital platforms, apps, and data-driven services.

This law is designed to address national security risks posed by applications and platforms that are owned, controlled, or influenced by foreign adversaries.

Key Provisions:

Consumers are told: “You agreed to it.” But in reality:

This raises a fundamental question:

Is consent valid when participation in modern society requires acceptance?

At both the state and federal level, a consistent pattern has emerged:

The result:

Incomplete legislation + weak enforcement = systemic backdoors for exploitation

Surveillance and data mining conducted through phones, computers, and connected products by any company—and especially by companies based in adversarial nations—extends far beyond privacy concerns and poses significant national security risks.

It impacts:

Key concerns include:

The Electronic Bill of Rights is based on three fundamental legal principles:

Terms of service cannot waive federally protected rights.

Coerced or conditional consent is not valid consent.

Licensed spectrum and regulated networks are not private data-extraction systems.

The issue is not the absence of law.

The issue is:

EBOR exists to:

The Electronic Bill of Rights calls on:

to recognize and act on the following reality:

Privacy, security, and safety are not optional features. They are legal rights.

This material is provided for informational and educational purposes only and does not constitute legal advice. Readers should consult qualified legal counsel for advice regarding specific legal matters.

Restoring Privacy, Security, and Consumer Protection Under Existing Law

The Electronic Bill of Rights Framework for Clean Data Business Practices is available upon request.

Electronic Bill of Rights Framework for Clean Data Business Practices

Article I: Right to Data Privacy

Article II: Right to Data Security

Article III: Right to Digital Anonymity

Article IV: Right to Be Forgotten

Article V: Right to Opt-Out of Data Monetization

Article VIII: Right to Digital Freedom and Free Speech

Article IX: Right to Own and Control Digital Identity

Article X: Right to Decentralized and Open Internet

Article XI: Right to Protection from Corporate and Foreign Surveillance

Article XII: Right to National and Consumer Security and Safety

Article XIII: The Abolishment of Web Scraping, Web Crawling, and Web Tracking

Article XIV: The Right to Accountability from Tech Giants

Article XV: The Right to Safe, Secure, and Private Preinstalled Apps & Technology

Article XVI: The Right to Safe Technology

Article XVII: The Right to Influencer and Bot Transparency

Article XIX: Freedom from Addictive, Divisive, and Manipulative Technology

Article XX: Freedom from Government & Tech Collusion

Article XXI: Right to Data Collection Transparency

Article XXII: Freedom from Indiscriminate Surveillance and Data Mining

Article XXIII: Freedom from Forced Participation by Way of Legal Agreements

Article XXIX: Freedom to Control Technology and Connected Products

Article XXX: The Right to Transparent Legal Language & App Permissions

Article XXXI: Ban on Teen Acceptance of Legal Agreements

Article XXXII: Right to Transparent AI and Algorithmic Accountability

Article XXXIII: Right to Fair Terms, Conditions & Legal Consent

Article XXXIV: Anti-Trust Protections & Internet Centralization

Article XXXV: National, Internet, and Technology Safety

Article XXXVI: The Right to Sue and Hold Tech Giants Accountable

Article XXXVII: Prohibition on AdTech Surveillance Infrastructure in Government and Civil-Military Programs, Such as Those Involving the U.S. Government and Palantir Technologies

Article XXXVIII: Equal Protection for Digital Currency, Financial, Biological, Medical, Legal, and AI–Quantum Platforms

All rights, protections, and enforcement mechanisms established under the Electronic Bill of Rights shall apply equally to all essential digital services and emerging technologies, including but not limited to:

These protections shall extend across all layers of the digital ecosystem, ensuring that no platform, technology, or service is exempt from accountability due to its classification as “emerging,” “experimental,” or “innovative.”

Universal Applicability to AI-Infused Digital Services

The combined Articles of the Electronic Bill of Rights shall apply in full to all AI-infused applications, platforms, chatbots, and digital services, regardless of delivery model, including:

No developer, platform provider, or technology company shall bypass or dilute these protections through terms of service, contracts of adhesion, or technical architecture.

Future-Proofing Consumer Protection

As technology evolves, all new categories of essential digital services shall automatically fall under the protections of the Electronic Bill of Rights without requiring additional legislation.

This ensures that innovation does not outpace consumer protection, and that human rights, data sovereignty, and individual autonomy remain preserved in the age of AI and quantum computing.

My name is Rex M. Lee, and I am the author of the Electronic Bill of Rights. I have over 35 years of experience in telecommunications, cybersecurity, and technology, including service as a senior executive.

As a result, when I write or speak about technology, telecommunications, and cybersecurity, I do so from firsthand experience.

I helped launch Houdinisoft, the world’s largest legal hacking firm, whose technology was adopted by Verizon, Cricket (AT&T), MetroPCS (T-Mobile), and other global mobile network operators and was also used for digital forensics.

I specialize in threats posed by Surveillance Capitalism, tech addiction, AI indoctrination, tech-based hybrid warfare, and civil-military fusion programs in Russia, China, and the United States.

In 2017, after advising Congress regarding the Facebook-Cambridge Analytica scandal, I was asked by Senator Ted Cruz’s office to author a congressional policy change proposal for digital rights from a developer’s perspective rather than from tech lobbyists working for Big Tech.

My initial digital rights proposal targeted the root cause of tech addiction, harm, and loss of privacy. It centered on banning Surveillance Capitalism, which would restore privacy, security, and safety to consumers of smartphones, computers, and connected products. Yet, my proposal was rejected by the U.S. Senate because there is no profit in solving the problem—especially by addressing targeted advertising, which fuels tech addiction and the loss of privacy.

I stopped advising Congress after the Instagram-Facebook whistleblower hearing involving former Facebook product manager Frances Haugen because the hearings became little more than puppet shows for grandstanding lawmakers who publicly claim they want to solve the problem while simultaneously taking money from the U.S.-China tech lobby. After each hearing, it became business as usual in Silicon Valley.

Aside from the harms caused by tech addiction, there are numerous other harms driven by targeted advertising rooted in Surveillance Capitalism (see harms below).

Addressing tech addiction alone will not restore privacy, security, or safety to consumers of smartphones, computers, and connected products powered by Android, iOS, or Windows.

Advertisers are equally—if not more—responsible for fueling tech addiction, manipulation, harm, and death among end users of AI-infused apps, social media platforms, and AI chatbots, including adults, teens, and children.

As a matter of fact, an entire multi-billion-dollar privacy industry has emerged in which so-called tech safety and privacy advocates make millions of dollars through books, documentaries, paid network TV and podcast appearances, interviews, and speaking fees.

While these efforts may raise awareness, many of these advocates refuse to fully endorse banning Surveillance Capitalism because this predatory, exploitative, and harmful business model fuels the very industry from which they profit.

Many of these so-called advocates refuse to hold advertisers responsible for fueling tech addiction, harm, and death. They likewise refuse to hold Google, Apple, or Microsoft responsible for distributing highly addictive AI-infused apps, social media platforms, gaming platforms, and AI chatbots that induce AI indoctrination, known as the ELIZA effect.

Without the continuation of Surveillance Capitalism, many of these paid advocates and attorneys would no longer have careers built around addressing the symptoms of a problem whose root cause, Surveillance Capitalism, they refuse to eliminate.

Rex M. Lee: Rlee@ElectronicBillofRights.com